[ECC DS Week 3] 1. Start Here: A Gentle Introduction

0. Introduction: Home Credit Default Risk Competition

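- Predicting whether a customer will repay a loan or run into difficulty is a critical business need.
- Competition goal: use historical loan application data to predict whether an applicant will be able to repay a loan.
  - This is a standard classification problem.
- Supervised
  - The labels are included in the training data.
  - The goal is to train a model to predict the label from the features.
- Classification
  - The label is a binary variable.
  - 0 (loan repaid on time) vs 1 (difficulty repaying the loan)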

0-1. Understanding the Data Structure

- The data is provided by Home Credit.
  - Home Credit is a service that provides credit (loans) to the unbanked population.

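a) Data sources

1. application_train/application_test

- The main train/test data, with information about each loan application at Home Credit.
- Every loan has its own row and is identified by the `SK_ID_CURR` feature.
- The training data comes with the `TARGET`:
  - 0: the loan was repaid
  - 1: the loan was not repaid

2. bureau

- Data about the client's previous credits from other financial institutions.
- Each previous credit has its own row, but one loan in the application data can have multiple previous credits.

3. bureau_balance

- Monthly data about the previous credits in bureau.
- Each row is one month of a previous credit, and a single previous credit can have multiple rows, one for each month of the credit length.

4. previous_application

- Previous applications for loans at Home Credit made by clients who have loans in the application data.
- Each current loan in the application data can have multiple previous loans.
- Each previous application has one row and is identified by the `SK_ID_PREV` feature.

5. POS_CASH_BALANCE

- Monthly data about previous point of sale or cash loans that clients have had with Home Credit.
- Each row is one month of a previous point of sale or cash loan, and a single previous loan can have many rows.

6. credit_card_balance

- Monthly data about previous credit cards that clients have had with Home Credit.
- Each row is one month of a credit card balance, and a single credit card can have many rows.

7. installments_payments

- Payment history for previous loans at Home Credit.
- There is one row for every payment made and one row for every missed payment.

(+)

- `HomeCredit_columns_description.csv`: a file with definitions for all of the columns
- An example submission file

b) Data structure diagram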

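0-2. Evaluation Metric: ROC AUC

- Once you have a grasp of the data, reading through the column descriptions helps.
- This competition uses ROC AUC, a well-known classification metric.
- Receiver Operating Characteristic Area Under the Curve (ROC AUC, also called AUROC)
- The ROC curve plots the true positive rate (TPR) against the false positive rate (FPR).
- Each line on the graph is the curve for a single model, and moving along a line corresponds to changing the threshold used to classify an instance as positive.
  - The threshold starts at 0 in the upper right and goes to 1 in the lower left.
- A curve that lies to the left of and above another curve indicates a better model.
  - The blue model is better than the green model, which in turn is better than the red diagonal line representing a naive random-guessing model.
- The AUC is simply the area under the ROC curve.
  - That is, the integral of the curve.
- It takes values between 0 and 1, and a higher value indicates a better model.
  - A model that simply guesses at random has a ROC AUC of 0.5.
- When a classifier is measured by ROC AUC, it does not generate 0 or 1 predictions (hard yes/no answers) but a probability between 0 and 1.
  - When the classes are imbalanced, accuracy is not the best metric.
- Example: if you wanted a model that detects terrorists with 99.9999% accuracy, you could simply predict that no one is a terrorist and the accuracy would still come out high.
- To deal with such situations, more advanced metrics such as ROC AUC or the F1 score are used.
  - A model with a high ROC AUC often has high accuracy as well, but ROC AUC is a better representation of model performance when the classes are imbalanced.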
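The point about accuracy and imbalanced classes can be illustrated with a minimal sketch. It assumes scikit-learn is installed; the labels and scores are made up for illustration and are not taken from the competition data.

```python
import numpy as np
from sklearn.metrics import accuracy_score, roc_auc_score

# Hypothetical labels: 1,000 samples of which only 10 are positive
y_true = np.zeros(1000, dtype=int)
y_true[:10] = 1

# "Model" A gives every sample the same score (no ranking ability at all)
constant_scores = np.zeros(1000)

# "Model" B scores every positive higher than every negative
ranking_scores = np.where(y_true == 1, 0.3, 0.1)

# With a 0.5 threshold both models predict class 0 for everyone
acc_constant = accuracy_score(y_true, (constant_scores >= 0.5).astype(int))
acc_ranking = accuracy_score(y_true, (ranking_scores >= 0.5).astype(int))

# ROC AUC only looks at how well the scores rank positives above negatives
auc_constant = roc_auc_score(y_true, constant_scores)
auc_ranking = roc_auc_score(y_true, ranking_scores)

print('accuracy:', acc_constant, acc_ranking)
print('ROC AUC :', auc_constant, auc_ranking)
```

Both toy models reach the same accuracy because they never predict the rare class, yet their ROC AUC values differ, which is why the metric is computed from probabilities rather than hard 0/1 predictions.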

0-3. Notebooks Worth Referencing

1. Preparing Libraries & Data

1-1. Libraries & Modules

### Data manipulation
import numpy as np
import pandas as pd

### Encoder for categorical variables
from sklearn.preprocessing import LabelEncoder

### File system management
import os

### Suppress warnings
import warnings
warnings.filterwarnings('ignore')

### Plotting libraries
import matplotlib.pyplot as plt
import seaborn as sns

1-2. Loading the Data

- All available data files:
  - The main file for training (with target ⭕)
  - The main file for testing (without target ❌)
  - An example submission file
  - 6 other files with additional information about each loan

from google.colab import drive

drive.mount('/content/drive')

Mounted at /content/drive

# List the available data files

print(os.listdir('/content/drive/MyDrive/Colab Notebooks/ECC 48기 데과B/3주차/data'))

['HomeCredit_columns_description.csv', 'POS_CASH_balance.csv', 'application_test.csv', 'application_train.csv', 'bureau.csv', 'bureau_balance.csv', 'credit_card_balance.csv', 'installments_payments.csv', 'previous_application.csv', 'sample_submission.csv']

# Training data

app_train = pd.read_csv('/content/drive/MyDrive/Colab Notebooks/ECC 48기 데과B/3주차/data/application_train.csv')

print('Training data shape: ', app_train.shape)

Training data shape: (307511, 122)

app_train.head()

|  | SK_ID_CURR | TARGET | NAME_CONTRACT_TYPE | CODE_GENDER | FLAG_OWN_CAR | FLAG_OWN_REALTY | CNT_CHILDREN | AMT_INCOME_TOTAL | AMT_CREDIT | AMT_ANNUITY | ... | FLAG_DOCUMENT_18 | FLAG_DOCUMENT_19 | FLAG_DOCUMENT_20 | FLAG_DOCUMENT_21 | AMT_REQ_CREDIT_BUREAU_HOUR | AMT_REQ_CREDIT_BUREAU_DAY | AMT_REQ_CREDIT_BUREAU_WEEK | AMT_REQ_CREDIT_BUREAU_MON | AMT_REQ_CREDIT_BUREAU_QRT | AMT_REQ_CREDIT_BUREAU_YEAR |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 100002 | 1 | Cash loans | M | N | Y | 0 | 202500.0 | 406597.5 | 24700.5 | ... | 0 | 0 | 0 | 0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 1.0 |

| 1 | 100003 | 0 | Cash loans | F | N | N | 0 | 270000.0 | 1293502.5 | 35698.5 | ... | 0 | 0 | 0 | 0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 |

| 2 | 100004 | 0 | Revolving loans | M | Y | Y | 0 | 67500.0 | 135000.0 | 6750.0 | ... | 0 | 0 | 0 | 0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 |

| 3 | 100006 | 0 | Cash loans | F | N | Y | 0 | 135000.0 | 312682.5 | 29686.5 | ... | 0 | 0 | 0 | 0 | NaN | NaN | NaN | NaN | NaN | NaN |

| 4 | 100007 | 0 | Cash loans | M | N | Y | 0 | 121500.0 | 513000.0 | 21865.5 | ... | 0 | 0 | 0 | 0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 |

5 rows × 122 columns

- The training data has 307,511 observations (each one a separate loan) and 122 features (variables), including the `TARGET` (the label we want to predict).

# Test data

app_test = pd.read_csv('/content/drive/MyDrive/Colab Notebooks/ECC 48기 데과B/3주차/data/application_test.csv')

print('Testing data shape: ', app_test.shape)

Testing data shape: (48744, 121)

app_test.head()

|  | SK_ID_CURR | NAME_CONTRACT_TYPE | CODE_GENDER | FLAG_OWN_CAR | FLAG_OWN_REALTY | CNT_CHILDREN | AMT_INCOME_TOTAL | AMT_CREDIT | AMT_ANNUITY | AMT_GOODS_PRICE | ... | FLAG_DOCUMENT_18 | FLAG_DOCUMENT_19 | FLAG_DOCUMENT_20 | FLAG_DOCUMENT_21 | AMT_REQ_CREDIT_BUREAU_HOUR | AMT_REQ_CREDIT_BUREAU_DAY | AMT_REQ_CREDIT_BUREAU_WEEK | AMT_REQ_CREDIT_BUREAU_MON | AMT_REQ_CREDIT_BUREAU_QRT | AMT_REQ_CREDIT_BUREAU_YEAR |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 100001 | Cash loans | F | N | Y | 0 | 135000.0 | 568800.0 | 20560.5 | 450000.0 | ... | 0 | 0 | 0 | 0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 |

| 1 | 100005 | Cash loans | M | N | Y | 0 | 99000.0 | 222768.0 | 17370.0 | 180000.0 | ... | 0 | 0 | 0 | 0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 3.0 |

| 2 | 100013 | Cash loans | M | Y | Y | 0 | 202500.0 | 663264.0 | 69777.0 | 630000.0 | ... | 0 | 0 | 0 | 0 | 0.0 | 0.0 | 0.0 | 0.0 | 1.0 | 4.0 |

| 3 | 100028 | Cash loans | F | N | Y | 2 | 315000.0 | 1575000.0 | 49018.5 | 1575000.0 | ... | 0 | 0 | 0 | 0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 3.0 |

| 4 | 100038 | Cash loans | M | Y | N | 1 | 180000.0 | 625500.0 | 32067.0 | 625500.0 | ... | 0 | 0 | 0 | 0 | NaN | NaN | NaN | NaN | NaN | NaN |

5 rows × 121 columns

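- The test data is smaller and has no `TARGET` column.

2. Exploratory Data Analysis (EDA)

- An open-ended process in which we calculate statistics and make figures to find trends, anomalies, patterns, or relationships within the data.
- Goal: learn what the data can tell us.
- It generally starts with a high-level overview and then narrows in on specific areas as we find intriguing parts of the data.
- The findings may be interesting in their own right, or they can inform modeling choices, such as helping decide which features to use.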

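2-1. Examining the Distribution of the Target

- Goal: predict either 0 (loan repaid on time) or 1 (the client had payment difficulties).
- We can first examine the number of loans falling into each category.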

app_train['TARGET'].value_counts()

0    282686
1     24825
Name: TARGET, dtype: int64

app_train['TARGET'].astype(int).plot.hist()

<Axes: ylabel='Frequency'>

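- The classes are highly imbalanced.
  - There are far more loans repaid on time (0) than loans not repaid (1).
- Once we move to more sophisticated machine learning models, we can weight the classes by their representation in the data to reflect this imbalance.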
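As a rough sketch of what weighting the classes by their representation could look like, the snippet below derives "balanced" weights from the class counts printed above. Using scikit-learn's `compute_class_weight` here is an assumption for illustration; the notes do not say which weighting scheme would eventually be used.

```python
import numpy as np
from sklearn.utils.class_weight import compute_class_weight

# Class counts taken from the value_counts() output above
n_repaid, n_default = 282686, 24825
y = np.concatenate([np.zeros(n_repaid, dtype=int), np.ones(n_default, dtype=int)])

# 'balanced' weights are n_samples / (n_classes * count_of_class),
# so the rare class receives the larger weight
weights = compute_class_weight(class_weight='balanced', classes=np.array([0, 1]), y=y)
class_weights = dict(zip([0, 1], weights))
print(class_weights)
```

Many scikit-learn classifiers accept such a dictionary through their `class_weight` parameter, or the string `'balanced'` directly.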

2-2. Examining Missing Values

- Look at the number and percentage of missing values in each column.

### Function to calculate missing values by column
def missing_values_table(df):
    # Total number of missing values in each column
    mis_val = df.isnull().sum()

    # Percentage of missing values in each column
    mis_val_percent = 100 * df.isnull().sum() / len(df)

    # Build a table with the results
    mis_val_table = pd.concat([mis_val, mis_val_percent], axis = 1)  # concatenate column-wise

    # Rename the columns
    mis_val_table_ren_columns = mis_val_table.rename(
        columns = {0 : 'Missing Values', 1 : '% of Total Values'})

    # Keep only columns with missing values, sorted by percentage in descending order
    mis_val_table_ren_columns = mis_val_table_ren_columns[
        mis_val_table_ren_columns.iloc[:,1] != 0].sort_values(
        '% of Total Values', ascending = False).round(1)  # round to one decimal place

    # Print summary information
    print("Your selected dataframe has " + str(df.shape[1]) + " columns.\n"
          "There are " + str(mis_val_table_ren_columns.shape[0]) +
          " columns that have missing values.")

    return mis_val_table_ren_columns

# Summary of missing values
missing_values = missing_values_table(app_train)
missing_values.head(20)

Your selected dataframe has 122 columns.
There are 67 columns that have missing values.

|  | Missing Values | % of Total Values |

|---|---|---|

| COMMONAREA_MEDI | 214865 | 69.9 |

| COMMONAREA_AVG | 214865 | 69.9 |

| COMMONAREA_MODE | 214865 | 69.9 |

| NONLIVINGAPARTMENTS_MEDI | 213514 | 69.4 |

| NONLIVINGAPARTMENTS_MODE | 213514 | 69.4 |

| NONLIVINGAPARTMENTS_AVG | 213514 | 69.4 |

| FONDKAPREMONT_MODE | 210295 | 68.4 |

| LIVINGAPARTMENTS_MODE | 210199 | 68.4 |

| LIVINGAPARTMENTS_MEDI | 210199 | 68.4 |

| LIVINGAPARTMENTS_AVG | 210199 | 68.4 |

| FLOORSMIN_MODE | 208642 | 67.8 |

| FLOORSMIN_MEDI | 208642 | 67.8 |

| FLOORSMIN_AVG | 208642 | 67.8 |

| YEARS_BUILD_MODE | 204488 | 66.5 |

| YEARS_BUILD_MEDI | 204488 | 66.5 |

| YEARS_BUILD_AVG | 204488 | 66.5 |

| OWN_CAR_AGE | 202929 | 66.0 |

| LANDAREA_AVG | 182590 | 59.4 |

| LANDAREA_MEDI | 182590 | 59.4 |

| LANDAREA_MODE | 182590 | 59.4 |

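- When it comes time to build machine learning models, these missing values have to be filled in (known as imputation).
- In later work we will use models such as XGBoost that can handle missing values with no need for imputation.
- Another option is to drop columns with a high percentage of missing values.
  - However, it is impossible to know ahead of time whether these columns will be helpful to the model.
  - For now, we keep all of the columns.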
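For reference, the alternative of dropping sparse columns could be sketched as follows. The helper name `drop_sparse_columns` and the 60% cutoff are hypothetical choices for illustration; the notes keep every column.

```python
import numpy as np
import pandas as pd

def drop_sparse_columns(df, max_missing_pct=60.0):
    """Return a copy of df without columns whose missing percentage exceeds the limit."""
    missing_pct = 100 * df.isnull().mean()
    keep = missing_pct[missing_pct <= max_missing_pct].index
    return df[keep].copy()

# Small made-up frame to show the behaviour
toy = pd.DataFrame({
    'mostly_missing': [np.nan, np.nan, np.nan, 1.0],   # 75% missing
    'some_missing':   [1.0, np.nan, 3.0, 4.0],         # 25% missing
    'complete':       [1, 2, 3, 4],                    # 0% missing
})
reduced = drop_sparse_columns(toy)
print(list(reduced.columns))
```

Any such cutoff is a judgment call, which is one reason to defer the decision until a model can show whether the sparse columns carry signal.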

2-3. Column Types

# Number of columns of each data type
app_train.dtypes.value_counts()

float64    65
int64      41
object     16
dtype: int64

- Number of unique entries in each `object` (categorical) column:

# Number of unique classes in each object column
app_train.select_dtypes('object').apply(pd.Series.nunique, axis = 0)

NAME_CONTRACT_TYPE             2
CODE_GENDER                    3
FLAG_OWN_CAR                   2
FLAG_OWN_REALTY                2
NAME_TYPE_SUITE                7
NAME_INCOME_TYPE               8
NAME_EDUCATION_TYPE            5
NAME_FAMILY_STATUS             6
NAME_HOUSING_TYPE              6
OCCUPATION_TYPE               18
WEEKDAY_APPR_PROCESS_START     7
ORGANIZATION_TYPE             58
FONDKAPREMONT_MODE             4
HOUSETYPE_MODE                 3
WALLSMATERIAL_MODE             7
EMERGENCYSTATE_MODE            2
dtype: int64

- Most of the categorical variables have a relatively small number of unique entries.

2-4. 범주형 변수 Encoding

-

기계 학습 모델은 범주형 변수를 처리할 수 없음

-

LightGBM 과 같은 일부 모델 제외

-

모델에 적용하기 전에 이러한 변수를 숫자로 인코딩(표현) 해주어야 함

-

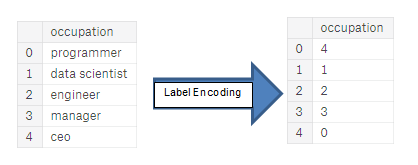

1. 레이블 인코딩

-

범주형 변수의 각 고유 범주에 정수를 할당

-

새로운 column이 생성되지 않음

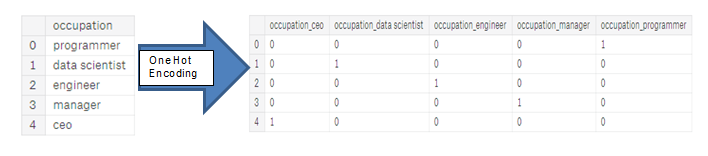

2. One-hot 인코딩

-

범주형 변수의 각 고유 범주에 대해 새로운 column을 생성

-

각 관측치는 해당 범주에 대한 열의 값으로 1을 저장하고 다른 모든 새로운 열에는 0을 저장

a) Label Encoding과 One-Hot Encoding

-

고유한 범주가 2개인 범주형 변수(

dtype == object)의 경우 레이블 인코딩을 사용하고, 고유한 범주가 2개 이상인 범주형 변수의 경우 원-핫 인코딩을 사용 -

레이블 인코딩의 경우 Scikit-Learn의

LabelEncoder를 사용하고, 원-핫 인코딩의 경우 pandas의get_dummies(df)함수를 사용
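The difference between the two encodings is easiest to see on a tiny made-up frame. The column names and values below are hypothetical and only stand in for the real categorical columns.

```python
import pandas as pd
from sklearn.preprocessing import LabelEncoder

toy = pd.DataFrame({
    'owns_car': ['Y', 'N', 'Y'],                      # 2 categories -> label encoding
    'housing':  ['House', 'Rented', 'With parents'],  # 3 categories -> one-hot encoding
})

# Label encoding: categories are mapped to integers in sorted order (N -> 0, Y -> 1)
toy['owns_car'] = LabelEncoder().fit_transform(toy['owns_car'])

# One-hot encoding: one new indicator column per category of the remaining text column
encoded = pd.get_dummies(toy)
print(encoded.columns.tolist())
```

Label encoding keeps a single column, while one-hot encoding replaces `housing` with three indicator columns, which is why the feature count grows after `get_dummies`.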

### Create a LabelEncoder object
le = LabelEncoder()
le_count = 0

# Iterate through the columns
for col in app_train:
    if app_train[col].dtype == 'object':  # categorical variable
        # If there are 2 or fewer unique categories
        if len(list(app_train[col].unique())) <= 2:
            ### Label encoding
            le.fit(app_train[col])  # fit the encoder on the training data

            # Transform both the training and the testing data
            app_train[col] = le.transform(app_train[col])
            app_test[col] = le.transform(app_test[col])

            # Count how many columns were label encoded
            le_count += 1

print('%d columns were label encoded.' % le_count)

3 columns were label encoded.

# One-hot encode the remaining categorical variables
app_train = pd.get_dummies(app_train)
app_test = pd.get_dummies(app_test)

print('Training Features shape: ', app_train.shape)
print('Testing Features shape: ', app_test.shape)

Training Features shape:  (307511, 243)
Testing Features shape:  (48744, 239)

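b) Aligning Train and Test Data

- Both the training and testing data need to have the same features (columns).
- One-hot encoding created more columns in the training data because some categorical variables had categories that do not appear in the testing data.
- To remove the columns in the training data that are not in the testing data, we need to align the dataframes.
- First, extract the target column from the training data.
  - It is not in the testing data, but we need to keep this information.
- When aligning, set `axis = 1` so that the dataframes are aligned on the columns and not on the rows.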

train_labels = app_train['TARGET']  # target values

### Align the training and testing data
# Keep only the columns present in both dataframes
app_train, app_test = app_train.align(app_test, join = 'inner', axis = 1)

# Add the target back into the training data
app_train['TARGET'] = train_labels

print('Training Features shape: ', app_train.shape)
print('Testing Features shape: ', app_test.shape)

Training Features shape:  (307511, 240)
Testing Features shape:  (48744, 239)

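- The training and testing data now have the same features (apart from the target).
- One-hot encoding has increased the number of features considerably.
  - To reduce the size of the dataset, we could try dimensionality reduction (removing features that are not relevant).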
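The alignment step can be reproduced on two tiny made-up frames. The column names are hypothetical; `cat_B` plays the role of a category that only occurs in the training data.

```python
import pandas as pd

train = pd.DataFrame({'ID': [1, 2], 'TARGET': [0, 1], 'cat_A': [1, 0], 'cat_B': [0, 1]})
test = pd.DataFrame({'ID': [3], 'cat_A': [1]})   # category B never occurs in the test data

labels = train['TARGET']                          # set the target aside first
train, test = train.align(test, join='inner', axis=1)   # keep only the shared columns
train['TARGET'] = labels                          # restore the target afterwards

print(train.columns.tolist(), test.columns.tolist())
```

Because the inner join keeps only shared columns, `TARGET` would be lost without setting it aside first, which is why the extract-and-restore step is needed.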

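2-5. Further EDA

a) Anomalies

- When doing EDA, we should always be on the lookout for anomalies in the data.
  - They may be due to mistyped numbers, errors in measuring equipment, or valid but extreme measurements.
- One way to detect anomalies is to look at the statistics of a column with the `describe()` method.
- The values in the `DAYS_BIRTH` column are negative because they are recorded relative to the current loan application.
  - To see these statistics in years, multiply by -1 and divide by the number of days in a year (365).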

(app_train['DAYS_BIRTH'] / -365).describe()

count    307511.000000
mean         43.936973
std          11.956133
min          20.517808
25%          34.008219
50%          43.150685
75%          53.923288
max          69.120548
Name: DAYS_BIRTH, dtype: float64

- There do not appear to be any outliers in age at either the high or the low end.

app_train['DAYS_EMPLOYED'].describe()

count    307511.000000
mean      63815.045904
std      141275.766519
min      -17912.000000
25%       -2760.000000
50%       -1213.000000
75%        -289.000000
max      365243.000000
Name: DAYS_EMPLOYED, dtype: float64

- The maximum value (365,243 days) is about 1,000 years!
  - An anomaly is present.

### Check the distribution of the data
app_train['DAYS_EMPLOYED'].plot.hist(title = 'Days Employment Histogram');
plt.xlabel('Days Employment');

- Let's subset the anomalous clients and see whether they tend to have higher or lower default rates than the other clients.

anom = app_train[app_train['DAYS_EMPLOYED'] == 365243]

non_anom = app_train[app_train['DAYS_EMPLOYED'] != 365243]

print('The non-anomalies default on %0.2f%% of loans' % (100 * non_anom['TARGET'].mean()))

print('The anomalies default on %0.2f%% of loans' % (100 * anom['TARGET'].mean()))

print('There are %d anomalous days of employment' % len(anom))

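The non-anomalies default on 8.66% of loans
The anomalies default on 5.40% of loans
There are 55374 anomalous days of employment

- The clients flagged as anomalous turn out to have a lower default rate.

✔ Handling the anomalies

- One of the safest approaches is to set the anomalies to missing values before machine learning and then fill them in (imputation).
  - Here all the anomalies have exactly the same value, so we fill them all with the same value in case these loans share something in common.
- Here we replace the anomalous values with `np.nan` and create a new boolean column indicating whether or not the value was anomalous.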

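# Create a flag column that records whether the value was anomalous
app_train['DAYS_EMPLOYED_ANOM'] = app_train["DAYS_EMPLOYED"] == 365243

# Replace the anomalous values with np.nan
# (assigning the result back avoids a chained in-place modification, which newer pandas versions warn about)
app_train['DAYS_EMPLOYED'] = app_train['DAYS_EMPLOYED'].replace({365243: np.nan})

app_train['DAYS_EMPLOYED'].plot.hist(title = 'Days Employment Histogram');
plt.xlabel('Days Employment');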

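- The distribution now looks much more in line with what we would expect, and we have also created a new column (`DAYS_EMPLOYED_ANOM`) that tells the model these values were originally anomalous.
  - This matters because the NaN values will later have to be filled in, probably with the median of the column.
- Apart from the anomalies, there do not appear to be any problems.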

app_test['DAYS_EMPLOYED_ANOM'] = app_test["DAYS_EMPLOYED"] == 365243
app_test["DAYS_EMPLOYED"] = app_test["DAYS_EMPLOYED"].replace({365243: np.nan})

print('There are %d anomalies in the test data out of %d entries' % (app_test["DAYS_EMPLOYED_ANOM"].sum(), len(app_test)))

There are 9274 anomalies in the test data out of 48744 entries

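b) Correlations

- One way to try to understand the data is to look for correlations between the features and the target.
- The correlation coefficient is not the best way to represent the relevance of a feature, but it does give an idea of possible relationships within the data.
- A general interpretation of the absolute value of the correlation coefficient:
  - 0 ~ 0.19: very weak
  - 0.20 ~ 0.39: weak
  - 0.40 ~ 0.59: moderate
  - 0.60 ~ 0.79: strong
  - 0.80 ~ 1.0: very strong

# Compute the correlations with the target and sort them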

correlations = app_train.corr()['TARGET'].sort_values()

print('Most Positive Correlations:\n', correlations.tail(15))

print()

print('\nMost Negative Correlations:\n', correlations.head(15))

Most Positive Correlations:
OCCUPATION_TYPE_Laborers                             0.043019
FLAG_DOCUMENT_3                                      0.044346
REG_CITY_NOT_LIVE_CITY                               0.044395
FLAG_EMP_PHONE                                       0.045982
NAME_EDUCATION_TYPE_Secondary / secondary special    0.049824
REG_CITY_NOT_WORK_CITY                               0.050994
DAYS_ID_PUBLISH                                      0.051457
CODE_GENDER_M                                        0.054713
DAYS_LAST_PHONE_CHANGE                               0.055218
NAME_INCOME_TYPE_Working                             0.057481
REGION_RATING_CLIENT                                 0.058899
REGION_RATING_CLIENT_W_CITY                          0.060893
DAYS_EMPLOYED                                        0.074958
DAYS_BIRTH                                           0.078239
TARGET                                               1.000000
Name: TARGET, dtype: float64

Most Negative Correlations:
EXT_SOURCE_3                           -0.178919
EXT_SOURCE_2                           -0.160472
EXT_SOURCE_1                           -0.155317
NAME_EDUCATION_TYPE_Higher education   -0.056593
CODE_GENDER_F                          -0.054704
NAME_INCOME_TYPE_Pensioner             -0.046209
DAYS_EMPLOYED_ANOM                     -0.045987
ORGANIZATION_TYPE_XNA                  -0.045987
FLOORSMAX_AVG                          -0.044003
FLOORSMAX_MEDI                         -0.043768
FLOORSMAX_MODE                         -0.043226
EMERGENCYSTATE_MODE_No                 -0.042201
HOUSETYPE_MODE_block of flats          -0.040594
AMT_GOODS_PRICE                        -0.039645
REGION_POPULATION_RELATIVE             -0.037227
Name: TARGET, dtype: float64

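- `DAYS_BIRTH` has the strongest positive correlation with the target (other than `TARGET` itself).
  - `DAYS_BIRTH` is the client's age in days at the time of the loan, stored as a negative number.
  - The correlation is positive, but the actual values of the feature are negative.
  - In other words, as the client gets older, they are less likely to default on the loan (i.e., target == 0).
  - Taking the absolute value of the feature makes the correlation negative.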

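c) Effect of Age on Repayment (Target)

# Days since birth as a positive number, used to compute the age
# (only the training data is converted here; app_test['DAYS_BIRTH'] stays negative)
app_train['DAYS_BIRTH'] = abs(app_train['DAYS_BIRTH'])

app_train['DAYS_BIRTH'].corr(app_train['TARGET'])

-0.07823930830982694

- It has a negative correlation with the target.
  - This means that as clients get older, they tend to repay their loans on time more often.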
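The sign flip is a general property: when every value of a feature is negative, taking the absolute value is the same as multiplying by -1, which reverses the sign of the correlation and leaves its magnitude unchanged. A small sketch with synthetic numbers (all values below are made up, not drawn from the competition data):

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(0)

# Hypothetical negative day counts, like DAYS_BIRTH before the abs() call
days_birth = -rng.integers(7500, 25000, size=1000)

# Hypothetical target: younger clients (values closer to 0) default more often
default_prob = 0.05 + 0.10 * (days_birth > -12000)
target = (rng.random(1000) < default_prob).astype(int)

s = pd.Series(days_birth)
t = pd.Series(target)

r_raw = s.corr(t)         # correlation with the negative values
r_abs = s.abs().corr(t)   # correlation after taking the absolute value

print(round(r_raw, 4), round(r_abs, 4))
```

The two coefficients have equal magnitude and opposite sign, matching the change from 0.078 to -0.078 seen above.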

### Plot a histogram
# Visualize with a histogram to take a closer look
# Put the x axis in years

# Set the style of plots
plt.style.use('fivethirtyeight')

# Distribution of age in years
plt.hist(app_train['DAYS_BIRTH'] / 365, edgecolor = 'k', bins = 25)
plt.title('Age of Client')
plt.xlabel('Age (years)')
plt.ylabel('Count')

Text(0, 0.5, 'Count')

- By itself, the age distribution does not tell us much other than that there are no outliers, as all the ages are reasonable.

### KDE plot
# Visualize the effect of age on the target with a kernel density estimate (KDE) plot
# A KDE shows the distribution of a single variable and can be thought of as a smoothed histogram
plt.figure(figsize = (10, 8))

# target = 0 (loan repaid on time)
sns.kdeplot(app_train.loc[app_train['TARGET'] == 0, 'DAYS_BIRTH'] / 365,
            label = 'target == 0')

# target = 1 (loan not repaid on time)
sns.kdeplot(app_train.loc[app_train['TARGET'] == 1, 'DAYS_BIRTH'] / 365,
            label = 'target == 1')

plt.xlabel('Age (years)'); plt.ylabel('Density'); plt.title('Distribution of Ages');

- The `target == 1` curve skews toward the younger end of the age range.
  - Although the correlation is not strong (a correlation coefficient of about -0.08), this variable is likely to be useful in a machine learning model because it does affect the target.

### Average failure to repay by age bracket
# To make this graph, first cut the age into bins of 5 years each, then compute the mean of the target for each bin
# This gives the ratio of loans not repaid in each age bracket

# Age information in a separate dataframe (copied so that new columns are not assigned to a view of app_train)
age_data = app_train[['TARGET', 'DAYS_BIRTH']].copy()
age_data['YEARS_BIRTH'] = age_data['DAYS_BIRTH'] / 365

# Bin the age data
age_data['YEARS_BINNED'] = pd.cut(age_data['YEARS_BIRTH'],
                                  bins = np.linspace(20, 70, num = 11))

age_data.head(10)

|  | TARGET | DAYS_BIRTH | YEARS_BIRTH | YEARS_BINNED |

|---|---|---|---|---|

| 0 | 1 | 9461 | 25.920548 | (25.0, 30.0] |

| 1 | 0 | 16765 | 45.931507 | (45.0, 50.0] |

| 2 | 0 | 19046 | 52.180822 | (50.0, 55.0] |

| 3 | 0 | 19005 | 52.068493 | (50.0, 55.0] |

| 4 | 0 | 19932 | 54.608219 | (50.0, 55.0] |

| 5 | 0 | 16941 | 46.413699 | (45.0, 50.0] |

| 6 | 0 | 13778 | 37.747945 | (35.0, 40.0] |

| 7 | 0 | 18850 | 51.643836 | (50.0, 55.0] |

| 8 | 0 | 20099 | 55.065753 | (55.0, 60.0] |

| 9 | 0 | 14469 | 39.641096 | (35.0, 40.0] |

# Group by the bins and compute the averages
age_groups = age_data.groupby('YEARS_BINNED').mean()

age_groups

| YEARS_BINNED | TARGET | DAYS_BIRTH | YEARS_BIRTH |
|---|---|---|---|

| (20.0, 25.0] | 0.123036 | 8532.795625 | 23.377522 |

| (25.0, 30.0] | 0.111436 | 10155.219250 | 27.822518 |

| (30.0, 35.0] | 0.102814 | 11854.848377 | 32.479037 |

| (35.0, 40.0] | 0.089414 | 13707.908253 | 37.555913 |

| (40.0, 45.0] | 0.078491 | 15497.661233 | 42.459346 |

| (45.0, 50.0] | 0.074171 | 17323.900441 | 47.462741 |

| (50.0, 55.0] | 0.066968 | 19196.494791 | 52.593136 |

| (55.0, 60.0] | 0.055314 | 20984.262742 | 57.491131 |

| (60.0, 65.0] | 0.052737 | 22780.547460 | 62.412459 |

| (65.0, 70.0] | 0.037270 | 24292.614340 | 66.555108 |

### Visualization

plt.figure(figsize = (8, 8))

# Graph the age bins and the average of the target as a bar plot

plt.bar(age_groups.index.astype(str), 100 * age_groups['TARGET'])

# Plot labeling

plt.xticks(rotation = 75); plt.xlabel('Age Group (years)'); plt.ylabel('Failure to Repay (%)')

plt.title('Failure to Repay by Age Group');

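- Younger applicants are more likely to fail to repay their loans.
  - The three youngest age groups have a failure rate above 10%, and the oldest age group has a rate below 5%.
- Because younger clients are less likely to repay their loans, it may be advisable to provide them with more guidance or financial planning tips.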

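d) Exterior Sources

- The 3 variables with the strongest negative correlations with the target are `EXT_SOURCE_1`, `EXT_SOURCE_2`, and `EXT_SOURCE_3`.
- According to the documentation, these features represent a normalized score from an external data source.
  - They can be thought of as a kind of cumulative credit rating made using numerous data sources.

### Correlations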

ext_data = app_train[['TARGET', 'EXT_SOURCE_1', 'EXT_SOURCE_2', 'EXT_SOURCE_3', 'DAYS_BIRTH']]

ext_data_corrs = ext_data.corr()

ext_data_corrs

|  | TARGET | EXT_SOURCE_1 | EXT_SOURCE_2 | EXT_SOURCE_3 | DAYS_BIRTH |

|---|---|---|---|---|---|

| TARGET | 1.000000 | -0.155317 | -0.160472 | -0.178919 | -0.078239 |

| EXT_SOURCE_1 | -0.155317 | 1.000000 | 0.213982 | 0.186846 | 0.600610 |

| EXT_SOURCE_2 | -0.160472 | 0.213982 | 1.000000 | 0.109167 | 0.091996 |

| EXT_SOURCE_3 | -0.178919 | 0.186846 | 0.109167 | 1.000000 | 0.205478 |

| DAYS_BIRTH | -0.078239 | 0.600610 | 0.091996 | 0.205478 | 1.000000 |

### Visualization

plt.figure(figsize = (8, 6))

# Heatmap

sns.heatmap(ext_data_corrs, cmap = plt.cm.RdYlBu_r, vmin = -0.25,

annot = True, vmax = 0.6)

plt.title('Correlation Heatmap');

- All three `EXT_SOURCE` features have negative correlations with the target.
  - As the value of `EXT_SOURCE` increases, the client is more likely to repay the loan.
- `DAYS_BIRTH` is positively correlated with `EXT_SOURCE_1`.
  - This suggests that one of the factors in this score may be the client's age.

### Distribution of each feature by target value
# Visualize the effect of each variable on the target
plt.figure(figsize = (10, 12))

# iterate through the sources
for i, source in enumerate(['EXT_SOURCE_1', 'EXT_SOURCE_2', 'EXT_SOURCE_3']):
    # create a new subplot for each source
    plt.subplot(3, 1, i + 1)

    # repaid on time (target = 0)
    sns.kdeplot(app_train.loc[app_train['TARGET'] == 0, source], label = 'target == 0')

    # not repaid on time (target = 1)
    sns.kdeplot(app_train.loc[app_train['TARGET'] == 1, source], label = 'target == 1')

    plt.title('Distribution of %s by Target Value' % source)
    plt.xlabel('%s' % source); plt.ylabel('Density');

plt.tight_layout(h_pad = 2.5)

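- `EXT_SOURCE_3` shows the greatest difference between the values of the target.
- The plots show clearly that these features have some relationship to the likelihood of an applicant repaying a loan.
  - The relationship is not strong.
  - In fact, all of the correlations are considered very weak.
- Even so, these variables are likely to be useful for a machine learning model that predicts whether an applicant will repay a loan on time.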

2-6. Pairs Plot

- As a final exploratory plot, we can make a pairs plot of the `EXT_SOURCE` variables and the `DAYS_BIRTH` variable.
- It shows the relationships between multiple pairs of variables as well as the distributions of single variables.

# Copy the data for plotting
plot_data = ext_data.drop(columns = ['DAYS_BIRTH']).copy()

# Add the age of the client in years
plot_data['YEARS_BIRTH'] = age_data['YEARS_BIRTH']

# Drop missing values and keep only the rows with index labels up to 100000
plot_data = plot_data.dropna().loc[:100000, :]

### Function to calculate the correlation coefficient between two variables
def corr_func(x, y, **kwargs):
    r = np.corrcoef(x, y)[0][1]  # correlation coefficient
    ax = plt.gca()
    ax.annotate("r = {:.2f}".format(r),
                xy=(.2, .8), xycoords = ax.transAxes,
                size = 10)

### Visualization
# Create the PairGrid object
grid = sns.PairGrid(data = plot_data, diag_sharey=False,
                    hue = 'TARGET',
                    vars = [x for x in list(plot_data.columns) if x != 'TARGET'])

# Upper triangle: scatter plots
grid.map_upper(plt.scatter, alpha = 0.2)

# Diagonal: KDE plots
grid.map_diag(sns.kdeplot)

# Lower triangle: density plots
grid.map_lower(sns.kdeplot, cmap = plt.cm.OrRd_r);

# Title for the whole figure
plt.suptitle('Ext Source and Age Features Pairs Plot', size = 20, y = 1.05);

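- Red: loans that were not repaid (target = 1) / blue: loans that were repaid (target = 0).
- There appears to be a moderate positive linear relationship between `EXT_SOURCE_1` and `DAYS_BIRTH` (or equivalently `YEARS_BIRTH`).
  - This indicates that this feature may take the client's age into account.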

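3. Feature Engineering

- Kaggle competitions are won by feature engineering.
  - These competitions tend to be won by whoever can create the most useful features out of the data.
  - This is mostly true for structured data, where the winning models tend to be variants of gradient boosting.
- This represents one of the patterns in machine learning:
  - Feature engineering tends to have a greater return than model building and hyperparameter tuning.
- Choosing the right model and optimal settings is important, but a model can only learn from the data it is given.
  - Making sure this data is as relevant to the task as possible is the job of the data scientist.
- Feature engineering refers to a general process and includes both feature construction (adding new features from the existing data) and feature selection (choosing only the most important features, or using other methods of dimensionality reduction).
- A lot of feature engineering is done when using the other data sources, but this notebook tries only two simple feature construction methods:
  - Polynomial features
  - Domain knowledge features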

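3-1. Polynomial Features

- One simple feature construction method is polynomial features.
  - We create features that are powers of existing features as well as interaction terms between existing features.
  - For example, we can create the variables `EXT_SOURCE_1^2` and `EXT_SOURCE_2^2`, and also variables such as `EXT_SOURCE_1 x EXT_SOURCE_2`, `EXT_SOURCE_1 x EXT_SOURCE_2^2`, `EXT_SOURCE_1^2 x EXT_SOURCE_2^2`, and so on.
- Features that are combinations of multiple individual variables are called interaction terms because they capture the interactions between variables.
  - Two variables may not have a strong influence on the target by themselves, but combining them into a single interaction variable might show a meaningful relationship with the target.
- Interaction terms are commonly used in statistical models to capture the effects of multiple variables, but they are used less often in machine learning.
  - Here we try a few to see whether they help the model predict whether clients will repay their loans.
- The following code creates polynomial features from the `EXT_SOURCE` variables and the `DAYS_BIRTH` variable.
- Scikit-Learn has a useful class called `PolynomialFeatures` that creates the polynomials and interaction terms up to a specified degree.
  - Here we use `degree = 3`.
- When creating polynomial features, the number of features grows very quickly with the degree and overfitting can become a problem, so using too high a degree is not recommended.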
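How quickly the feature count grows can be checked directly. The sketch below assumes the four input columns used in these notes and relies on the standard count of monomials: with the bias column included, `n` inputs at degree `d` give C(n + d, d) output features.

```python
from math import comb

import numpy as np
from sklearn.preprocessing import PolynomialFeatures

n_inputs = 4  # EXT_SOURCE_1, EXT_SOURCE_2, EXT_SOURCE_3, DAYS_BIRTH
X = np.zeros((1, n_inputs))  # placeholder data; only the number of columns matters here

counts = {}
for degree in range(1, 6):
    transformer = PolynomialFeatures(degree=degree).fit(X)
    counts[degree] = transformer.n_output_features_
    # Compare against the closed-form count C(n_inputs + degree, degree)
    print(degree, counts[degree], comb(n_inputs + degree, degree))
```

At degree 3 this gives the 35 columns reported in the output, and degree 5 would already produce 126 columns from just four inputs.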

### Select the columns for the polynomial features
poly_features = app_train[['EXT_SOURCE_1', 'EXT_SOURCE_2', 'EXT_SOURCE_3', 'DAYS_BIRTH', 'TARGET']]
poly_features_test = app_test[['EXT_SOURCE_1', 'EXT_SOURCE_2', 'EXT_SOURCE_3', 'DAYS_BIRTH']]

### Imputer for handling missing values
# Using the old Imputer class raises an error, so SimpleImputer is used instead
from sklearn.impute import SimpleImputer
imputer = SimpleImputer(strategy = 'median')  # replace missing values with the median

### Separate the target from the features
poly_target = poly_features['TARGET']
poly_features = poly_features.drop(columns = ['TARGET'])

### Impute missing values
poly_features = imputer.fit_transform(poly_features)  # fit & transform

## The test data must be transformed "identically", with the imputer fitted on the training data
poly_features_test = imputer.transform(poly_features_test)  # transform only

### Create the polynomial features
from sklearn.preprocessing import PolynomialFeatures
poly_transformer = PolynomialFeatures(degree = 3)

### Fit the transformer on the training features
poly_transformer.fit(poly_features)

### Transform the features
poly_features = poly_transformer.transform(poly_features)  # transform (already fitted above)
poly_features_test = poly_transformer.transform(poly_features_test)  # transform only

print('Polynomial Features shape: ', poly_features.shape)

Polynomial Features shape:  (307511, 35)

- Quite a few new features have been created.
- To get the feature names, use the `get_feature_names_out()` method.

poly_transformer.get_feature_names_out(input_features = ['EXT_SOURCE_1', 'EXT_SOURCE_2', 'EXT_SOURCE_3', 'DAYS_BIRTH'])[:15]

array(['1', 'EXT_SOURCE_1', 'EXT_SOURCE_2', 'EXT_SOURCE_3', 'DAYS_BIRTH',

'EXT_SOURCE_1^2', 'EXT_SOURCE_1 EXT_SOURCE_2',

'EXT_SOURCE_1 EXT_SOURCE_3', 'EXT_SOURCE_1 DAYS_BIRTH',

'EXT_SOURCE_2^2', 'EXT_SOURCE_2 EXT_SOURCE_3',

'EXT_SOURCE_2 DAYS_BIRTH', 'EXT_SOURCE_3^2',

'EXT_SOURCE_3 DAYS_BIRTH', 'DAYS_BIRTH^2'], dtype=object)

- There are 35 features, made up of individual features raised to powers up to degree 3 and interaction terms.
- Check whether the new features are correlated with the target.

### Create a DataFrame of the features
poly_features = pd.DataFrame(poly_features,
                             columns = poly_transformer.get_feature_names_out(['EXT_SOURCE_1', 'EXT_SOURCE_2',
                                                                               'EXT_SOURCE_3', 'DAYS_BIRTH']))

### Add the target
poly_features['TARGET'] = poly_target

### Correlations with the target
poly_corrs = poly_features.corr()['TARGET'].sort_values()

### Most negative and most positive correlations
print(poly_corrs.head(10))
print(poly_corrs.tail(5))

EXT_SOURCE_2 EXT_SOURCE_3                -0.193939
EXT_SOURCE_1 EXT_SOURCE_2 EXT_SOURCE_3   -0.189605
EXT_SOURCE_2 EXT_SOURCE_3 DAYS_BIRTH     -0.181283
EXT_SOURCE_2^2 EXT_SOURCE_3              -0.176428
EXT_SOURCE_2 EXT_SOURCE_3^2              -0.172282
EXT_SOURCE_1 EXT_SOURCE_2                -0.166625
EXT_SOURCE_1 EXT_SOURCE_3                -0.164065
EXT_SOURCE_2                             -0.160295
EXT_SOURCE_2 DAYS_BIRTH                  -0.156873
EXT_SOURCE_1 EXT_SOURCE_2^2              -0.156867
Name: TARGET, dtype: float64
DAYS_BIRTH     -0.078239
DAYS_BIRTH^2   -0.076672
DAYS_BIRTH^3   -0.074273
TARGET          1.000000
1                    NaN
Name: TARGET, dtype: float64

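- Several of the new variables have a greater correlation (in absolute value) with the target than the original features.
- When we build machine learning models, we can compare models with and without these features to determine whether the polynomial features actually help the model learn.
  - We add these features to copies of the training and testing data and then evaluate models with and without the polynomial features.
  - The only way to know is to try it out.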

### Put the test features into a DataFrame
poly_features_test = pd.DataFrame(poly_features_test,
                                  columns = poly_transformer.get_feature_names_out(['EXT_SOURCE_1', 'EXT_SOURCE_2',
                                                                                    'EXT_SOURCE_3', 'DAYS_BIRTH']))

### Merge the polynomial features into the training dataframe
poly_features['SK_ID_CURR'] = app_train['SK_ID_CURR']
app_train_poly = app_train.merge(poly_features, on = 'SK_ID_CURR', how = 'left')

### Merge the polynomial features into the testing dataframe
poly_features_test['SK_ID_CURR'] = app_test['SK_ID_CURR']
app_test_poly = app_test.merge(poly_features_test, on = 'SK_ID_CURR', how = 'left')

### Align the dataframes
app_train_poly, app_test_poly = app_train_poly.align(app_test_poly, join = 'inner', axis = 1)

### Print the shapes of the new dataframes
print('Training data with polynomial features shape: ', app_train_poly.shape)
print('Testing data with polynomial features shape: ', app_test_poly.shape)

Training data with polynomial features shape:  (307511, 275)
Testing data with polynomial features shape:  (48744, 275)

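3-2. Domain Knowledge Features

- Since we are not credit experts it may not be entirely correct to call this "domain knowledge", but we can call it an attempt at applying limited financial knowledge.
- With that in mind, we can create a few features that attempt to capture what we think may be important for telling whether a client will default on a loan.
  - `CREDIT_INCOME_PERCENT`: the credit amount relative to the client's income
  - `ANNUITY_INCOME_PERCENT`: the loan annuity relative to the client's income
  - `CREDIT_TERM`: described as the length of the payment in months (since the annuity is the monthly amount due); note that the code computes `AMT_ANNUITY / AMT_CREDIT`, which is the reciprocal of that length
  - `DAYS_EMPLOYED_PERCENT`: the days employed relative to the client's age in days

### Create several features based on domain knowledge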

app_train_domain = app_train.copy()

app_test_domain = app_test.copy()

app_train_domain['CREDIT_INCOME_PERCENT'] = app_train_domain['AMT_CREDIT'] / app_train_domain['AMT_INCOME_TOTAL']

app_train_domain['ANNUITY_INCOME_PERCENT'] = app_train_domain['AMT_ANNUITY'] / app_train_domain['AMT_INCOME_TOTAL']

app_train_domain['CREDIT_TERM'] = app_train_domain['AMT_ANNUITY'] / app_train_domain['AMT_CREDIT']

app_train_domain['DAYS_EMPLOYED_PERCENT'] = app_train_domain['DAYS_EMPLOYED'] / app_train_domain['DAYS_BIRTH']

app_test_domain['CREDIT_INCOME_PERCENT'] = app_test_domain['AMT_CREDIT'] / app_test_domain['AMT_INCOME_TOTAL']

app_test_domain['ANNUITY_INCOME_PERCENT'] = app_test_domain['AMT_ANNUITY'] / app_test_domain['AMT_INCOME_TOTAL']

app_test_domain['CREDIT_TERM'] = app_test_domain['AMT_ANNUITY'] / app_test_domain['AMT_CREDIT']

app_test_domain['DAYS_EMPLOYED_PERCENT'] = app_test_domain['DAYS_EMPLOYED'] / app_test_domain['DAYS_BIRTH']
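Since the same four ratios are computed twice, they could also be wrapped in a small helper. The function name `add_domain_features` and the single-row frame below are hypothetical and only illustrate what each ratio measures.

```python
import pandas as pd

def add_domain_features(df):
    """Return a copy of df with the four ratio features added."""
    out = df.copy()
    out['CREDIT_INCOME_PERCENT'] = out['AMT_CREDIT'] / out['AMT_INCOME_TOTAL']
    out['ANNUITY_INCOME_PERCENT'] = out['AMT_ANNUITY'] / out['AMT_INCOME_TOTAL']
    out['CREDIT_TERM'] = out['AMT_ANNUITY'] / out['AMT_CREDIT']
    out['DAYS_EMPLOYED_PERCENT'] = out['DAYS_EMPLOYED'] / out['DAYS_BIRTH']
    return out

# Made-up loan: 120,000 credit repaid in installments of 10,000
toy = pd.DataFrame({
    'AMT_CREDIT': [120000.0],
    'AMT_ANNUITY': [10000.0],
    'AMT_INCOME_TOTAL': [60000.0],
    'DAYS_EMPLOYED': [-1000.0],
    'DAYS_BIRTH': [-10000.0],
})
toy_domain = add_domain_features(toy)

# CREDIT_TERM is the share of the credit paid per installment;
# its reciprocal is the number of installments needed to repay the credit
installments_to_repay = 1 / toy_domain['CREDIT_TERM'].iloc[0]
print(toy_domain.iloc[0].to_dict(), installments_to_repay)
```

For this made-up loan the reciprocal of `CREDIT_TERM` is 12 installments, which shows that the ratio itself is a payment rate and not a duration.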

⏺ Visualizing the new variables

- Explore the domain knowledge variables visually in graphs.
- Visualize them with KDE plots colored by the value of the target.

plt.figure(figsize = (12, 20))

### Iterate through the new features
for i, feature in enumerate(['CREDIT_INCOME_PERCENT', 'ANNUITY_INCOME_PERCENT', 'CREDIT_TERM', 'DAYS_EMPLOYED_PERCENT']):
    # create a new subplot for each variable
    plt.subplot(4, 1, i + 1)

    # loans repaid on time
    sns.kdeplot(app_train_domain.loc[app_train_domain['TARGET'] == 0, feature], label = 'target == 0')

    # loans not repaid on time
    sns.kdeplot(app_train_domain.loc[app_train_domain['TARGET'] == 1, feature], label = 'target == 1')

    # Plot labeling
    plt.title('Distribution of %s by Target Value' % feature)
    plt.xlabel('%s' % feature); plt.ylabel('Density');

plt.tight_layout(h_pad = 2.5)

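- Looking at the graphs above, it is hard to say whether the new features will be useful.
  - So we simply try them in a model and check the performance (just do it).

(+) Personal note

- The features are heavily skewed.
  - In a regression problem, this could actually cause the model to overfit.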

4. Baseline Models

- For a naive baseline, we could guess the same value for every example in the test set.
  - We are asked to predict the probability of not repaying the loan (target = 1), so if we are entirely unsure, we could guess 0.5 for all observations in the test set (essentially a coin flip).
- We use Logistic Regression, a slightly more sophisticated model than this naive baseline.

4-1. Logistic Regression

- To get a baseline, we use all of the features after encoding the categorical variables.
  - We preprocess the data by filling in the missing values (imputation) and normalizing the range of the features (feature scaling).

from sklearn.preprocessing import MinMaxScaler
from sklearn.impute import SimpleImputer

### Drop the target from the training data
if 'TARGET' in app_train:
    train = app_train.drop(columns = ['TARGET'])
else:
    train = app_train.copy()

### Feature names
features = list(train.columns)

### Copy of the test data
test = app_test.copy()

### Missing values: replace with the median
imputer = SimpleImputer(strategy = 'median')

### Scale each feature to the range 0 ~ 1
scaler = MinMaxScaler(feature_range = (0, 1))

### Imputation
imputer.fit(train)                # fit the imputer on the training data only
train = imputer.transform(train)  # transform the training data
test = imputer.transform(test)    # reuse the medians learned from the training data as-is

### Scaling
scaler.fit(train)
train = scaler.transform(train)
test = scaler.transform(test)

print('Training data shape: ', train.shape)
print('Testing data shape: ', test.shape)

Training data shape: (307511, 240)
Testing data shape: (48744, 240)
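The fit-on-train, transform-both pattern used above can also be written as a single scikit-learn pipeline. This is a sketch on hypothetical toy arrays (`toy_train`, `toy_test`), not the preprocessing the post actually ran; `make_pipeline` is swapped in here only to keep the two steps together.

```python
import numpy as np
from sklearn.impute import SimpleImputer
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import MinMaxScaler

# Hypothetical toy data; np.nan marks a missing value.
toy_train = np.array([[1.0, 10.0], [np.nan, 20.0], [3.0, np.nan], [5.0, 40.0]])
toy_test = np.array([[np.nan, 50.0], [2.0, np.nan]])

preprocess = make_pipeline(SimpleImputer(strategy="median"),
                           MinMaxScaler(feature_range=(0, 1)))

# Medians and min/max values are learned from the training rows only ...
toy_train_scaled = preprocess.fit_transform(toy_train)
# ... and reused unchanged on the test rows.
toy_test_scaled = preprocess.transform(toy_test)

print(toy_train_scaled)
print(toy_test_scaled)
```

Because the scaler only knows the training range, a test value larger than the training maximum (50 versus 40 here) is mapped above 1. That is expected behavior, not a bug.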

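- For the first model we use Scikit-Learn's `LogisticRegression`.
- The only change from the default settings is to lower the regularization parameter C, which controls the amount of overfitting.
  - C is the inverse of the regularization strength, so a lower value means stronger regularization and less overfitting.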
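The effect of C can be checked by comparing coefficient sizes under weak and strong regularization. The sketch below assumes synthetic data from `make_classification`; only the value C = 0.0001 comes from this section.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression

# Hypothetical synthetic data, used only to show the effect of C.
X, y = make_classification(n_samples=500, n_features=10, random_state=0)

weak_reg = LogisticRegression(C=1.0, max_iter=1000).fit(X, y)       # scikit-learn default
strong_reg = LogisticRegression(C=0.0001, max_iter=1000).fit(X, y)  # value used in this section

norm_weak = np.linalg.norm(weak_reg.coef_)
norm_strong = np.linalg.norm(strong_reg.coef_)
print(norm_weak, norm_strong)
```

With the smaller C the coefficients are pulled much closer to zero, which is what limits overfitting.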

### Apply logistic regression
from sklearn.linear_model import LogisticRegression

# Create the model object
log_reg = LogisticRegression(C = 0.0001)

# Fit (train) the model
log_reg.fit(train, train_labels)

LogisticRegression(C=0.0001)

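- Now that the model has been trained, we can use it to make predictions.
- We use the `predict_proba()` method to predict the probability of not repaying a loan (target = 1).
  - The first column is the probability that the target is 0, and the second column is the probability that the target is 1.
  - For a single row, the two columns must sum to 1.
  - We want the probability that the loan is not repaid (target = 1), so we select the second column.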
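The column layout of `predict_proba()` can be verified on a small example. The data here is synthetic and hypothetical; the point is only that the columns follow `classes_` and each row sums to 1.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression

# Hypothetical synthetic binary data.
X, y = make_classification(n_samples=200, n_features=5, random_state=0)
clf = LogisticRegression(max_iter=1000).fit(X, y)

proba = clf.predict_proba(X[:5])
print(clf.classes_)       # column order of predict_proba
print(proba.sum(axis=1))  # each row sums to 1

p_target_1 = proba[:, 1]  # probability of the class stored in clf.classes_[1]
print(p_target_1)
```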

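### Prediction
# Select only the probability of not repaying the loan
log_reg_pred = log_reg.predict_proba(test)[:, 1]

### Submission DataFrame
# .copy() avoids pandas' SettingWithCopyWarning when the new column is added
submit = app_test[['SK_ID_CURR']].copy()
submit['TARGET'] = log_reg_pred

submit.head()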

|   | SK_ID_CURR | TARGET |
|---|---|---|
| 0 | 100001 | 0.078515 |
| 1 | 100005 | 0.137926 |
| 2 | 100013 | 0.082194 |
| 3 | 100028 | 0.080921 |
| 4 | 100038 | 0.132618 |

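- The predictions are probabilities between 0 and 1 that a loan will not be repaid (target = 1).
  - If we wanted to classify applicants, we could set a probability threshold above which a loan is considered risky.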
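Turning these probabilities into a risky / not risky decision takes one comparison per row. The threshold of 0.10 below is a hypothetical value chosen for illustration; the competition itself scores the raw probabilities, so no threshold is needed for the submission.

```python
# Probabilities from the first five rows of the submission shown above
probabilities = [0.078515, 0.137926, 0.082194, 0.080921, 0.132618]

# Hypothetical cut-off; in practice it would be chosen from the cost of each type of error.
threshold = 0.10

flags = [int(p >= threshold) for p in probabilities]
print(flags)  # [0, 1, 0, 0, 1]
```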

# Save the submission to a csv file

submit.to_csv('log_reg_baseline.csv', index = False)

- This submission scores about 0.671.

4-2. Improved Model: Random Forest

- Let's try to improve on this by using a RandomForest on the same training data.
- A random forest is a much more powerful model, especially when it uses hundreds of trees.
- We set `n_estimators = 100`.

from sklearn.ensemble import RandomForestClassifier

### Create the RandomForest object
random_forest = RandomForestClassifier(n_estimators = 100, random_state = 50,
                                       verbose = 1, n_jobs = -1)

### Train the model
random_forest.fit(train, train_labels)

### Extract the feature importances
feature_importance_values = random_forest.feature_importances_
feature_importances = pd.DataFrame({'feature': features, 'importance': feature_importance_values})

### Make predictions on the test data
predictions = random_forest.predict_proba(test)[:, 1]

[Parallel(n_jobs=-1)]: Using backend ThreadingBackend with 2 concurrent workers.
[Parallel(n_jobs=-1)]: Done 46 tasks | elapsed: 1.7min
[Parallel(n_jobs=-1)]: Done 100 out of 100 | elapsed: 3.5min finished
[Parallel(n_jobs=2)]: Using backend ThreadingBackend with 2 concurrent workers.
[Parallel(n_jobs=2)]: Done 46 tasks | elapsed: 0.8s
[Parallel(n_jobs=2)]: Done 100 out of 100 | elapsed: 2.3s finished

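### Submission code
# .copy() avoids pandas' SettingWithCopyWarning when the new column is added
submit = app_test[['SK_ID_CURR']].copy()
submit['TARGET'] = predictions

submit.to_csv('random_forest_baseline.csv', index = False)

- This submission scores about 0.678.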

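- Making predictions with the engineered features
  - The only way to see whether the polynomial features and the domain knowledge improved the model is to train and test a model on these features.
  - We then compare its performance with that of the model without these features to measure the effect of the feature engineering.

⏺ Testing polynomial features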

poly_features_names = list(app_train_poly.columns)  # names of the polynomial features

### Replace missing values with the median
imputer = SimpleImputer(strategy = 'median')

poly_features = imputer.fit_transform(app_train_poly)
poly_features_test = imputer.transform(app_test_poly)

### Scaling
scaler = MinMaxScaler(feature_range = (0, 1))

poly_features = scaler.fit_transform(poly_features)
poly_features_test = scaler.transform(poly_features_test)

### Create the model object
random_forest_poly = RandomForestClassifier(n_estimators = 100, random_state = 50,
                                            verbose = 1, n_jobs = -1)

### Train the model
random_forest_poly.fit(poly_features, train_labels)

### Predict
predictions = random_forest_poly.predict_proba(poly_features_test)[:, 1]

[Parallel(n_jobs=-1)]: Using backend ThreadingBackend with 2 concurrent workers.
[Parallel(n_jobs=-1)]: Done 46 tasks | elapsed: 2.2min
[Parallel(n_jobs=-1)]: Done 100 out of 100 | elapsed: 4.9min finished
[Parallel(n_jobs=2)]: Using backend ThreadingBackend with 2 concurrent workers.
[Parallel(n_jobs=2)]: Done 46 tasks | elapsed: 0.5s
[Parallel(n_jobs=2)]: Done 100 out of 100 | elapsed: 1.0s finished

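### Submission code
# .copy() avoids pandas' SettingWithCopyWarning when the new column is added
submit = app_test[['SK_ID_CURR']].copy()
submit['TARGET'] = predictions

submit.to_csv('random_forest_baseline_engineered.csv', index = False)

- Score: about 0.678
  - This is the same as the score without the polynomial features.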

⏺ Testing domain features

# Drop the target before processing the data
app_train_domain = app_train_domain.drop(columns = 'TARGET')

domain_features_names = list(app_train_domain.columns)

### Replace missing values with the median
imputer = SimpleImputer(strategy = 'median')

domain_features = imputer.fit_transform(app_train_domain)
domain_features_test = imputer.transform(app_test_domain)

### Scaling
scaler = MinMaxScaler(feature_range = (0, 1))

domain_features = scaler.fit_transform(domain_features)
domain_features_test = scaler.transform(domain_features_test)

### Create the model object
random_forest_domain = RandomForestClassifier(n_estimators = 100, random_state = 50,
                                              verbose = 1, n_jobs = -1)

### Train the model
random_forest_domain.fit(domain_features, train_labels)

### Extract the feature importances
feature_importance_values_domain = random_forest_domain.feature_importances_
feature_importances_domain = pd.DataFrame({'feature': domain_features_names,
                                           'importance': feature_importance_values_domain})

### Predict
predictions = random_forest_domain.predict_proba(domain_features_test)[:, 1]

[Parallel(n_jobs=-1)]: Using backend ThreadingBackend with 2 concurrent workers.
[Parallel(n_jobs=-1)]: Done 46 tasks | elapsed: 1.4min
[Parallel(n_jobs=-1)]: Done 100 out of 100 | elapsed: 3.1min finished
[Parallel(n_jobs=2)]: Using backend ThreadingBackend with 2 concurrent workers.
[Parallel(n_jobs=2)]: Done 46 tasks | elapsed: 1.3s
[Parallel(n_jobs=2)]: Done 100 out of 100 | elapsed: 2.9s finished

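### Submission code
# .copy() avoids pandas' SettingWithCopyWarning when the new column is added
submit = app_test[['SK_ID_CURR']].copy()
submit['TARGET'] = predictions

submit.to_csv('random_forest_baseline_domain.csv', index = False)

- This submission scores 0.679.
  - In other words, the engineered features do not really help this model.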

4-3. Feature Importances

- A simple way to see which variables are the most relevant is to look at the feature importances of the RandomForest.
- Given the correlations we saw in the exploratory data analysis (EDA), we should expect the most important features to be the `EXT_SOURCE` variables and `DAYS_BIRTH`.
- These feature importances can later be used for dimensionality reduction.
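As a small illustration of what `feature_importances_` returns, the sketch below fits a forest on hypothetical synthetic data with made-up column names. The importances of a fitted scikit-learn forest are non-negative and normalized so that they sum to 1.

```python
import pandas as pd
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier

# Hypothetical synthetic data with made-up column names.
X, y = make_classification(n_samples=300, n_features=6, n_informative=3, random_state=0)
names = [f"feature_{i}" for i in range(X.shape[1])]

forest = RandomForestClassifier(n_estimators=50, random_state=50).fit(X, y)

importances = pd.DataFrame({"feature": names,
                            "importance": forest.feature_importances_})
importances = importances.sort_values("importance", ascending=False)

print(importances)
print(importances["importance"].sum())
```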

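Visualizing feature importances

- Plot the importances returned by a model.
  - This works with any measure of feature importance, provided that a higher value means a more important feature.
- Arguments>
  - df (DataFrame):
    - The feature importances.
    - The features must be in a column named `feature` and the importances in a column named `importance`.
- Return>
  - Shows a plot of the 15 most important features.
  - Returns the DataFrame sorted by importance, with an added `importance_normalized` column.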

def plot_feature_importances(df):

    ### Sort the features by importance
    df = df.sort_values('importance', ascending = False).reset_index()

    ### Normalize the importances so that they sum to 1
    df['importance_normalized'] = df['importance'] / df['importance'].sum()

    ### Horizontal bar chart (bar length is proportional to importance)
    plt.figure(figsize = (10, 6))
    ax = plt.subplot()

    # Reverse the index so that the most important feature is plotted at the top
    ax.barh(list(reversed(list(df.index[:15]))),
            df['importance_normalized'].head(15),
            align = 'center', edgecolor = 'k')

    ### Axis settings
    ax.set_yticks(list(reversed(list(df.index[:15]))))
    ax.set_yticklabels(df['feature'].head(15))

    plt.xlabel('Normalized Importance'); plt.title('Feature Importances')
    plt.show()

    return df

feature_importances_sorted = plot_feature_importances(feature_importances)

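- As expected, the most important features are the `EXT_SOURCE` variables and `DAYS_BIRTH`.
- The plot shows that only a handful of features have a high importance to the model.
  - This suggests that we may be able to drop many of the features without a decrease in performance (it might drop slightly).
- Feature importances are not the most sophisticated way to interpret a model or to perform dimensionality reduction, but they help us understand which factors the model takes into account when it makes predictions.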
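One simple way to act on this observation is to keep only the top-ranked features whose normalized importances add up to a chosen share of the total. The function name `select_by_cumulative_importance`, the 0.95 threshold, and the importance values below are all hypothetical; the post itself does not perform this selection.

```python
import pandas as pd

# Hypothetical importances, not taken from the models in this post.
fi = pd.DataFrame({"feature": ["f1", "f2", "f3", "f4", "f5"],
                   "importance": [0.50, 0.25, 0.15, 0.07, 0.03]})

def select_by_cumulative_importance(df, threshold=0.95):
    """Keep the top features until their normalized importances reach `threshold`."""
    df = df.sort_values("importance", ascending=False).reset_index(drop=True)
    df["importance_normalized"] = df["importance"] / df["importance"].sum()
    df["cumulative"] = df["importance_normalized"].cumsum()
    # A feature is kept if the features ranked before it have not yet reached the threshold.
    reached_before = df["cumulative"].shift(fill_value=0.0)
    return df.loc[reached_before < threshold, "feature"].tolist()

kept = select_by_cumulative_importance(fi, threshold=0.95)
print(kept)  # ['f1', 'f2', 'f3', 'f4']
```

Whether dropping the remaining features actually preserves performance still has to be checked by retraining the model on the reduced set.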

feature_importances_domain_sorted = plot_feature_importances(feature_importances_domain)

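- All four of the hand-engineered features made it into the top 15 feature importances:
  `CREDIT_TERM`, `ANNUITY_INCOME_PERCENT`, `CREDIT_INCOME_PERCENT`, `DAYS_EMPLOYED_PERCENT`
- This suggests that the domain knowledge was at least partly on track, even though the submission score of this model barely changed (0.678 to 0.679).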

5. Further Study: Light Gradient Boosting Machine

- The Gradient Boosting Machine is currently the leading model for learning on structured datasets (especially on Kaggle).
- We train and test a LightGBM (LGBM) model using cross validation.
- Parameters>
  - features (pd.DataFrame):
    - The training features used to fit the model.
    - Must include the `TARGET` column.
  - test_features (pd.DataFrame):
    - The test features on which the model makes predictions.
  - encoding (str, default = 'ohe'):
    - The method used to encode the categorical variables.
    - `'ohe'` for one-hot encoding, `'le'` for integer label encoding.
  - n_folds (int, default = 5):
    - The number of folds to use for cross validation.
- Return>
  - submission (pd.DataFrame):
    - A DataFrame with the `SK_ID_CURR` values and the `TARGET` probabilities predicted by the model.
  - feature_importances (pd.DataFrame):
    - A DataFrame with the feature importances from the model.
  - valid_metrics (pd.DataFrame):
    - The training and validation scores (ROC AUC) for each fold and overall.
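The two `encoding` options can be compared on a toy example. The frames `toy_train` and `toy_test` are hypothetical; the calls (`pd.get_dummies`, `DataFrame.align`, `LabelEncoder`) are the ones the function in this section relies on.

```python
import pandas as pd
from sklearn.preprocessing import LabelEncoder

# Hypothetical toy frames; 'green' appears only in the training data.
toy_train = pd.DataFrame({"amount": [1.0, 2.0, 3.0], "color": ["red", "blue", "green"]})
toy_test = pd.DataFrame({"amount": [4.0, 5.0], "color": ["red", "blue"]})

# 'ohe': one indicator column per category, then keep only the shared columns.
train_ohe = pd.get_dummies(toy_train)
test_ohe = pd.get_dummies(toy_test)
train_ohe, test_ohe = train_ohe.align(test_ohe, join="inner", axis=1)
print(list(train_ohe.columns))

# 'le': each category becomes one integer, using the mapping fitted on the training data.
encoder = LabelEncoder()
train_codes = encoder.fit_transform(toy_train["color"])
test_codes = encoder.transform(toy_test["color"])
print(list(encoder.classes_), list(train_codes), list(test_codes))
```

The inner join drops `color_green` because the test data never produces that column, which keeps both frames at the same width for the model.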

from sklearn.model_selection import KFold
from sklearn.metrics import roc_auc_score
from sklearn.preprocessing import LabelEncoder
import lightgbm as lgb
import gc

def model(features, test_features, encoding = 'ohe', n_folds = 5):

    ### Prepare the data
    # Extract the ids
    train_ids = features['SK_ID_CURR']
    test_ids = test_features['SK_ID_CURR']

    # Extract the labels for training
    labels = features['TARGET']

    # Remove the ids and the target
    features = features.drop(columns = ['SK_ID_CURR', 'TARGET'])
    test_features = test_features.drop(columns = ['SK_ID_CURR'])

    # --------------------------------------------------------------
    ### Encoding
    ## One-hot encoding of the categorical variables
    if encoding == 'ohe':
        features = pd.get_dummies(features)
        test_features = pd.get_dummies(test_features)

        # Align the columns of the two dataframes
        features, test_features = features.align(test_features, join = 'inner', axis = 1)

        # No categorical indices to record
        cat_indices = 'auto'

    ## Integer label encoding of the categorical variables
    elif encoding == 'le':

        # Create the encoder object
        label_encoder = LabelEncoder()

        # List for storing the indices of the categorical variables
        cat_indices = []

        # Iterate over the columns
        for i, col in enumerate(features):
            if features[col].dtype == 'object':  # categorical variable
                # Map the categories to integers
                features[col] = label_encoder.fit_transform(np.array(features[col].astype(str)).reshape((-1,)))
                test_features[col] = label_encoder.transform(np.array(test_features[col].astype(str)).reshape((-1,)))

                # Record the index of the categorical variable
                cat_indices.append(i)

    ## Raise an error for an invalid encoding scheme
    else:
        raise ValueError("Encoding must be either 'ohe' or 'le'")

    # --------------------------------------------------------------------------
    ### Check the shape of the processed data
    print('Training Data Shape: ', features.shape)
    print('Testing Data Shape: ', test_features.shape)

    ### Extract the feature names
    feature_names = list(features.columns)

    ### Convert to np arrays
    features = np.array(features)
    test_features = np.array(test_features)

    # -------------------------------------------------------------------------
    ### Cross validation
    # Create the KFold object
    k_fold = KFold(n_splits = n_folds, shuffle = True, random_state = 50)

    # Empty array for the feature importances
    feature_importance_values = np.zeros(len(feature_names))

    # Empty array for the test predictions
    test_predictions = np.zeros(test_features.shape[0])

    # Empty array for the out-of-fold validation predictions
    out_of_fold = np.zeros(features.shape[0])

    # --------------------------------------------------------------------------
    ### Modeling
    # Lists for recording the validation and training scores
    valid_scores = []
    train_scores = []

    # Iterate over the folds
    for train_indices, valid_indices in k_fold.split(features):

        ## Prepare the data
        # Training data for the fold
        train_features, train_labels = features[train_indices], labels[train_indices]
        # Validation data for the fold
        valid_features, valid_labels = features[valid_indices], labels[valid_indices]

        ## Create the model object
        model = lgb.LGBMClassifier(n_estimators = 10000, objective = 'binary',
                                   class_weight = 'balanced', learning_rate = 0.05,
                                   reg_alpha = 0.1, reg_lambda = 0.1,
                                   subsample = 0.8, n_jobs = -1, random_state = 50)

        ## Train the model
        # Note: LightGBM 4.0+ removed early_stopping_rounds and verbose from fit();
        # there, pass callbacks = [lgb.early_stopping(100), lgb.log_evaluation(200)] instead
        model.fit(train_features, train_labels, eval_metric = 'auc',
                  eval_set = [(valid_features, valid_labels), (train_features, train_labels)],
                  eval_names = ['valid', 'train'], categorical_feature = cat_indices,
                  early_stopping_rounds = 100, verbose = 200)

        # Record the best iteration
        best_iteration = model.best_iteration_

        # Record the feature importances (averaged over the folds)
        feature_importance_values += model.feature_importances_ / k_fold.n_splits

        ## Predict (averaged over the folds)
        test_predictions += model.predict_proba(test_features, num_iteration = best_iteration)[:, 1] / k_fold.n_splits

        # Record the out-of-fold predictions
        out_of_fold[valid_indices] = model.predict_proba(valid_features, num_iteration = best_iteration)[:, 1]

        # Record the best scores
        valid_score = model.best_score_['valid']['auc']
        train_score = model.best_score_['train']['auc']

        valid_scores.append(valid_score)
        train_scores.append(train_score)

        ## Free memory
        gc.enable()
        del model, train_features, valid_features
        gc.collect()

    # --------------------------------------------------------------------------
    ### Build the submission dataframe
    submission = pd.DataFrame({'SK_ID_CURR': test_ids, 'TARGET': test_predictions})

    ### Feature importance dataframe
    feature_importances = pd.DataFrame({'feature': feature_names, 'importance': feature_importance_values})

    ### Overall validation score from the out-of-fold predictions
    valid_auc = roc_auc_score(labels, out_of_fold)

    ### Add the overall scores to the metrics
    valid_scores.append(valid_auc)
    train_scores.append(np.mean(train_scores))

    ### Fold names for the metrics dataframe
    fold_names = list(range(n_folds))
    fold_names.append('overall')

    ### Dataframe of training and validation scores
    metrics = pd.DataFrame({'fold': fold_names,
                            'train': train_scores,
                            'valid': valid_scores})

    return submission, feature_importances, metrics
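The out-of-fold bookkeeping inside the function is easier to follow in isolation. The sketch below is a simplified stand-in under stated assumptions: the data is synthetic, and `LogisticRegression` replaces the LightGBM model so that the example needs only scikit-learn. The `times_predicted` array is a hypothetical helper added just to check the logic.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import KFold

# Hypothetical synthetic data; LogisticRegression stands in for the LightGBM model.
X, y = make_classification(n_samples=500, n_features=8, random_state=0)
X_train, y_train, X_test = X[:400], y[:400], X[400:]

k_fold = KFold(n_splits=5, shuffle=True, random_state=50)
out_of_fold = np.zeros(X_train.shape[0])      # one validation prediction per training row
times_predicted = np.zeros(X_train.shape[0])  # bookkeeping for the check below
test_predictions = np.zeros(X_test.shape[0])  # average over the fold models

for train_idx, valid_idx in k_fold.split(X_train):
    fold_model = LogisticRegression(max_iter=1000)
    fold_model.fit(X_train[train_idx], y_train[train_idx])

    # Each row is predicted by the one model that did not see it during training.
    out_of_fold[valid_idx] = fold_model.predict_proba(X_train[valid_idx])[:, 1]
    times_predicted[valid_idx] += 1

    # Every fold model contributes 1 / n_splits of the final test prediction.
    test_predictions += fold_model.predict_proba(X_test)[:, 1] / k_fold.n_splits

overall_auc = roc_auc_score(y_train, out_of_fold)
print(overall_auc)
```

Because every training row receives exactly one prediction from a model that never saw it, the AUC computed on `out_of_fold` is the "overall" validation score reported in the metrics table.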

⏺ Training on the basic data

### Performance evaluation
submission, fi, metrics = model(app_train, app_test)

print('Baseline metrics')
print(metrics)

Training Data Shape: (307511, 239)

Testing Data Shape: (48744, 239)

[200] train's auc: 0.798723 train's binary_logloss: 0.547797 valid's auc: 0.755039 valid's binary_logloss: 0.563266

[400] train's auc: 0.82838 train's binary_logloss: 0.518334 valid's auc: 0.755107 valid's binary_logloss: 0.545575

[200] train's auc: 0.798409 train's binary_logloss: 0.548179 valid's auc: 0.758332 valid's binary_logloss: 0.563587

[400] train's auc: 0.828244 train's binary_logloss: 0.518308 valid's auc: 0.758563 valid's binary_logloss: 0.545588

[200] train's auc: 0.797648 train's binary_logloss: 0.549331 valid's auc: 0.763246 valid's binary_logloss: 0.564236

[200] train's auc: 0.798855 train's binary_logloss: 0.547952 valid's auc: 0.757131 valid's binary_logloss: 0.562234

[200] train's auc: 0.797918 train's binary_logloss: 0.548584 valid's auc: 0.758065 valid's binary_logloss: 0.564721

Baseline metrics

      fold     train     valid
0        0  0.816657  0.755215
1        1  0.816900  0.758754
2        2  0.808111  0.763630
3        3  0.811887  0.757583
4        4  0.811617  0.758344
5  overall  0.813034  0.758705

### Check the feature importances
fi_sorted = plot_feature_importances(fi)

### Create the submission file
submission.to_csv('baseline_lgb.csv', index = False)

- This submission scores about 0.735.

⏺ Adding the domain features

### Performance evaluation
app_train_domain['TARGET'] = train_labels

# Test the domain knowledge features
submission_domain, fi_domain, metrics_domain = model(app_train_domain, app_test_domain)

print('Baseline with domain knowledge features metrics')
print(metrics_domain)

Training Data Shape: (307511, 243)

Testing Data Shape: (48744, 243)

[200] train's auc: 0.804779 train's binary_logloss: 0.541283 valid's auc: 0.762511 valid's binary_logloss: 0.557227

[200] train's auc: 0.804016 train's binary_logloss: 0.542318 valid's auc: 0.765768 valid's binary_logloss: 0.557819

[200] train's auc: 0.8038 train's binary_logloss: 0.542856 valid's auc: 0.7703 valid's binary_logloss: 0.557925

[400] train's auc: 0.834559 train's binary_logloss: 0.511454 valid's auc: 0.770511 valid's binary_logloss: 0.538558

[200] train's auc: 0.804603 train's binary_logloss: 0.541718 valid's auc: 0.765497 valid's binary_logloss: 0.556274

[200] train's auc: 0.804782 train's binary_logloss: 0.541397 valid's auc: 0.765076 valid's binary_logloss: 0.558641

Baseline with domain knowledge features metrics

      fold     train     valid
0        0  0.815523  0.763069
1        1  0.807075  0.766062
2        2  0.832138  0.770730
3        3  0.811100  0.765884
4        4  0.819404  0.765249
5  overall  0.817048  0.766186

### Feature importances
fi_sorted = plot_feature_importances(fi_domain)

- Some of the features we built were picked up as important variables.
  - It is worth considering what other domain knowledge could be applied.

submission_domain.to_csv('baseline_lgb_domain_features.csv', index = False)

- This submission scores about 0.754.

6. Conclusions

6-1. Model performance comparison

- Without feature engineering
  - LogisticRegression: 0.671
  - RandomForest: 0.678
  - LGBM: 0.735
- With feature engineering
  - RandomForest: 0.678 (polynomial features), 0.679 (domain features)
    - No meaningful improvement.
  - LGBM: 0.754
    - A modest improvement.
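The size of each improvement follows directly from the scores listed above. The dictionary keys are labels made up for this sketch; the numbers are the submission scores reported in this post.

```python
# Submission scores reported in this post
scores = {
    "LogisticRegression": 0.671,
    "RandomForest": 0.678,
    "RandomForest + domain features": 0.679,
    "LGBM": 0.735,
    "LGBM + domain features": 0.754,
}

rf_gain = round(scores["RandomForest + domain features"] - scores["RandomForest"], 3)
lgbm_gain = round(scores["LGBM + domain features"] - scores["LGBM"], 3)
model_gain = round(scores["LGBM"] - scores["RandomForest"], 3)

print(rf_gain, lgbm_gain, model_gain)  # 0.001 0.019 0.057
```

Switching from RandomForest to LGBM moves the score about three times as much as adding the domain features to LGBM, and the same features add almost nothing to RandomForest.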

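6-2. Summary of the overall process

- First, make sure we understand the data, the task, and the metric by which submissions are judged.
- Perform a fairly simple EDA to identify relationships, trends, or anomalies that may help the modeling.
  - Along the way, carry out the necessary preprocessing steps such as encoding categorical variables, imputing missing values, and scaling features to a range.
- Construct new features from the existing data to see whether doing so helps the model.
- Complete the data exploration, data preparation, and feature engineering.
- Implement the models.
  - LogisticRegression, RandomForest
  - Train on the engineered variables -> check the performance.

📌 General outline of a machine learning project

- Understand the problem and the data
- Data cleaning and formatting
  - For this competition, this was mostly done for us.
- EDA
- Baseline model: build, train, and predict
- Improve the model
- Interpret the results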